by Fabio Ciambella, La Sapienza Università di Roma.

The SENS project, being a digital archive, is a useful testbed for corpus analysis and for the application of corpus linguistics tools. Many such tools are available; this short guide introduces basic examples of lexical and collocational analysis using Voyant tools[1] and the #Lancsbox software[2], in order to show the potential of both online tools and downloadable software. The examples in the following paragraphs are based on the semidiplomatic text of Arthur Brooke’s Romeus and Iuliet, taken as a sample corpus.

Voyant tools

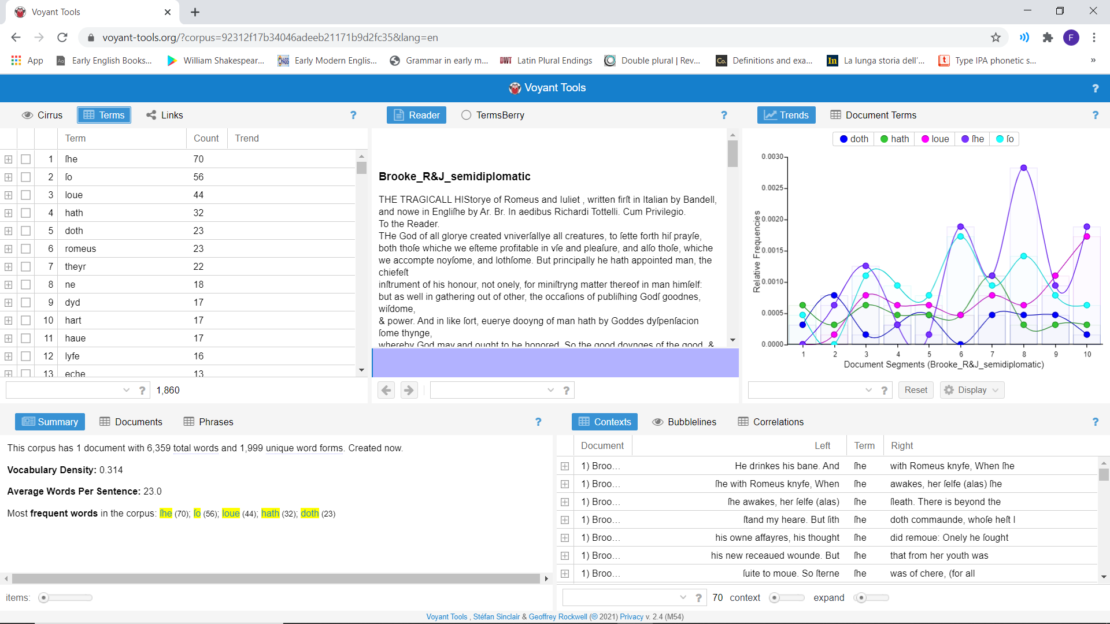

Corpora can be uploaded directly on the Voyant homepage, either by URL or as text files. Voyant automatically tags the text and presents the corpus analysis on a screen divided into five boxes (Fig. 1) containing (1) keywords, (2) the text, (3) keyword distribution, (4) general word count information, and (5) the context of any selected node.

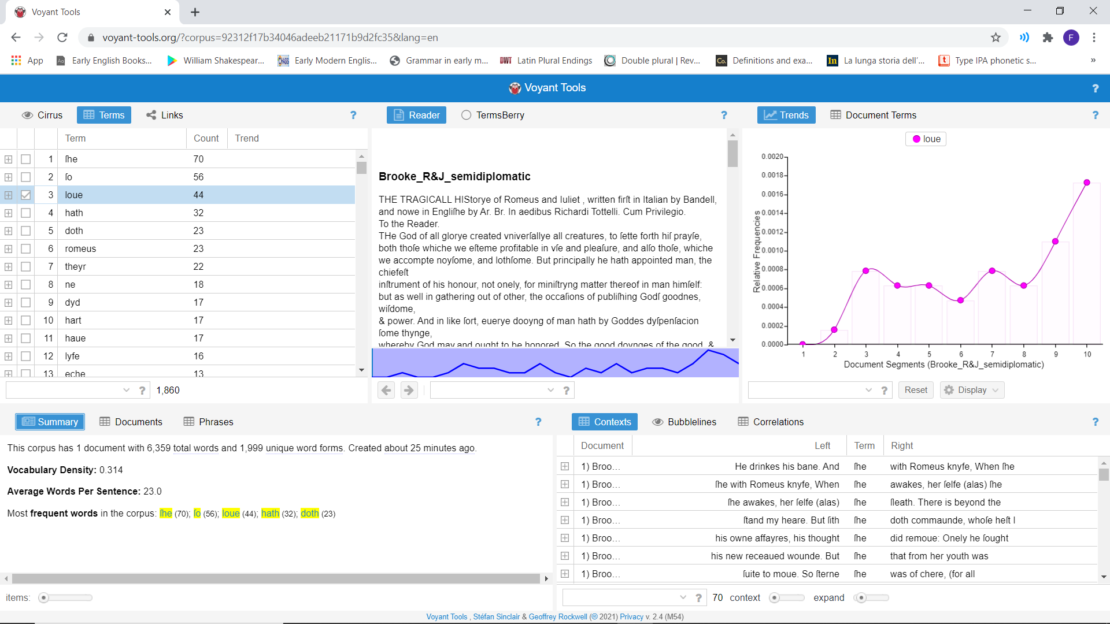

1. The Keywords tool lists the most frequent lexical words in the uploaded corpus; function words are automatically tagged and excluded. Clicking on any of these keywords updates boxes (2), (3) and (5), which respectively highlight the portions of the text where the keyword occurs, show its distribution in the corpus and the main n-grams it occurs in, and list its collocations (Fig. 2).

2. The second box shows the plain text, without irregular spacing, italics or bold. Only the distinction between upper-case and lower-case letters is preserved.

3. The Distribution tool charts the trend of a single keyword or of several selected keywords.

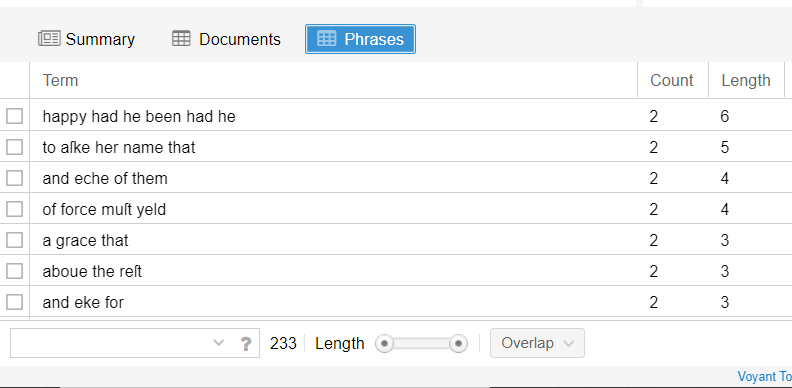

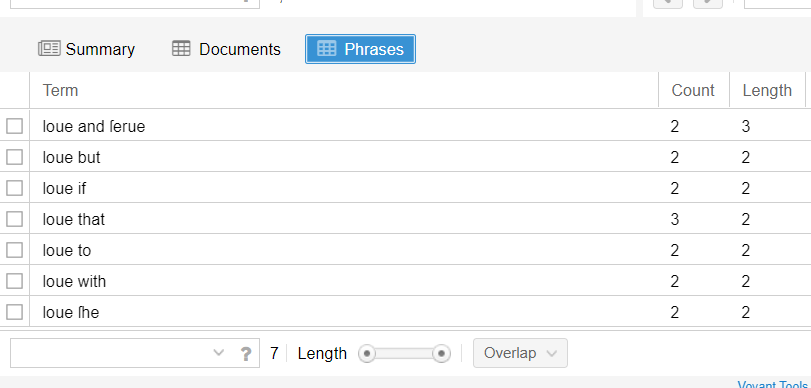

4. The Summary tool reports general information about word count: number of tokens, types and lemmas, TTR (type-token ratio), most frequent words, etc. The most frequent n-grams can also be displayed by clicking on the Phrases button (Fig. 3).

5. The Context tool shows any keyword within its collocational patterns through the Contexts, Bubblelines and Correlations functions.
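The figures reported in boxes (1), (3) and (4) can be approximated outside Voyant with a few lines of Python. What follows is only a minimal sketch, not Voyant's own implementation: the function names, the very short stop list and the sample sentence are all assumptions made for illustration, and the tokenizer simply keeps runs of letters.

```python
import re
from collections import Counter

# Hypothetical stop list for illustration; real stop lists are much longer.
STOPWORDS = {"the", "and", "of", "to", "a", "in", "that", "with", "his", "her"}


def tokenize(text):
    """Lower-case the text and return its runs of letters as tokens."""
    return re.findall(r"[^\W\d_]+", text.lower())


def summarize(text, top_n=5):
    """Return token count, type count, TTR and the most frequent content words."""
    tokens = tokenize(text)
    types = set(tokens)
    content_words = [t for t in tokens if t not in STOPWORDS]
    return {
        "tokens": len(tokens),
        "types": len(types),
        # Type-token ratio: distinct word forms divided by running words.
        "ttr": len(types) / len(tokens) if tokens else 0.0,
        "top_words": Counter(content_words).most_common(top_n),
    }


def distribution(tokens, word, segments=10):
    """Count occurrences of `word` in each of `segments` equal slices of the text."""
    counts = [0] * segments
    for i, tok in enumerate(tokens):
        if tok == word:
            counts[i * segments // len(tokens)] += 1
    return counts


if __name__ == "__main__":
    sample = "The love of Romeus and the love of Iuliet"  # invented sample, not Brooke's text
    print(summarize(sample))
    print(distribution(tokenize(sample), "love", segments=3))
```

Because TTR falls as a text grows longer, values computed this way are only comparable between texts of similar size.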

#Lancsbox

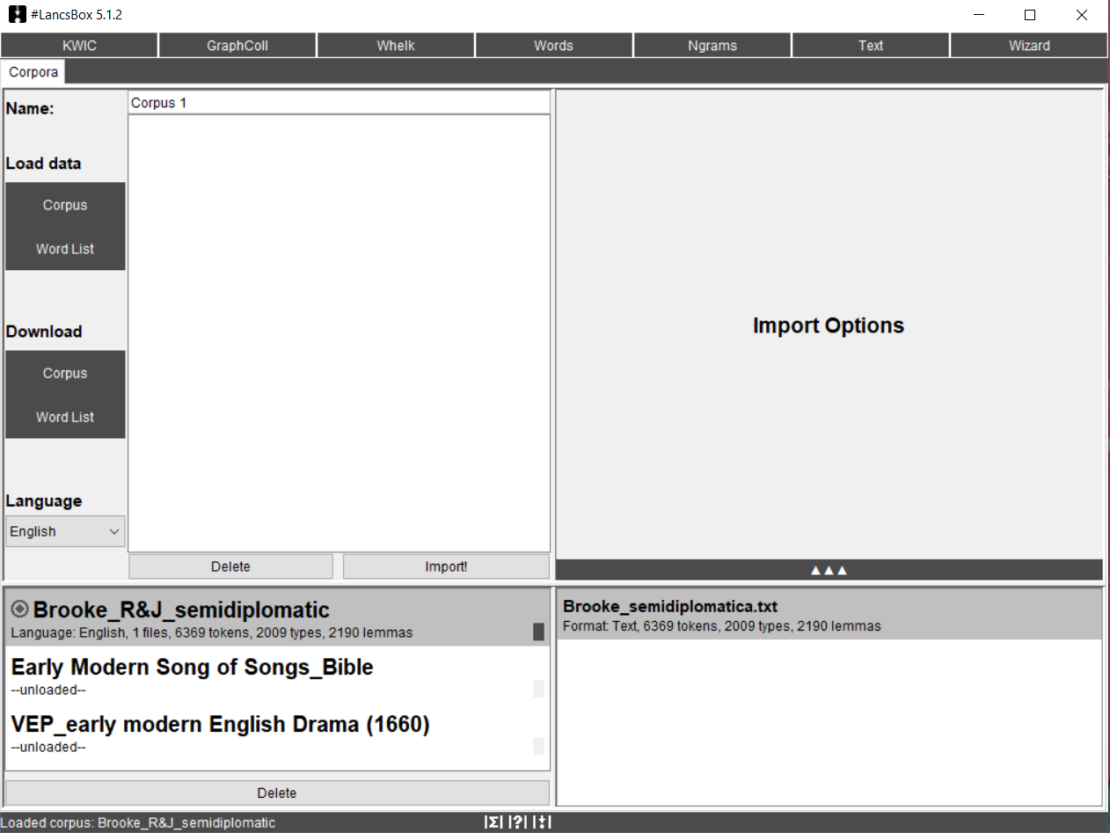

Data can either be loaded from local text files saved in different formats (.txt, .xml, .doc, .docx, .pdf, .odt, .xls, .xlsx, etc.) or downloaded from a database of corpora built into the software (BNC, Brown, LOB, and others). Languages other than English can be selected, which allows the software to tag parts of speech (PoS) automatically. The screenshot below (Fig. 4) shows the #Lancsbox Corpora tab with the same text file used above. The numbers of tokens, types and lemmas have already been calculated by the software.

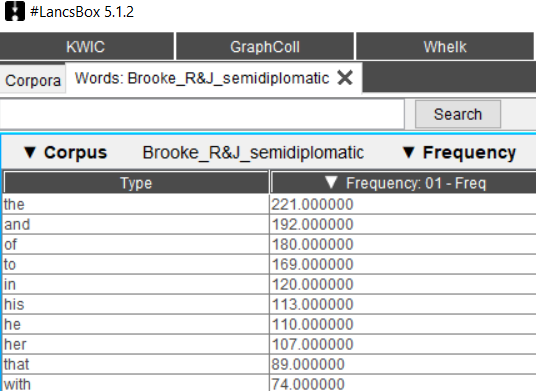

Any effective lexical analysis should start with keyword extraction. The Words tool (Fig. 5) allows users to examine the frequencies of types, lemmas and PoS, and to compare subcorpora using the keywords technique.

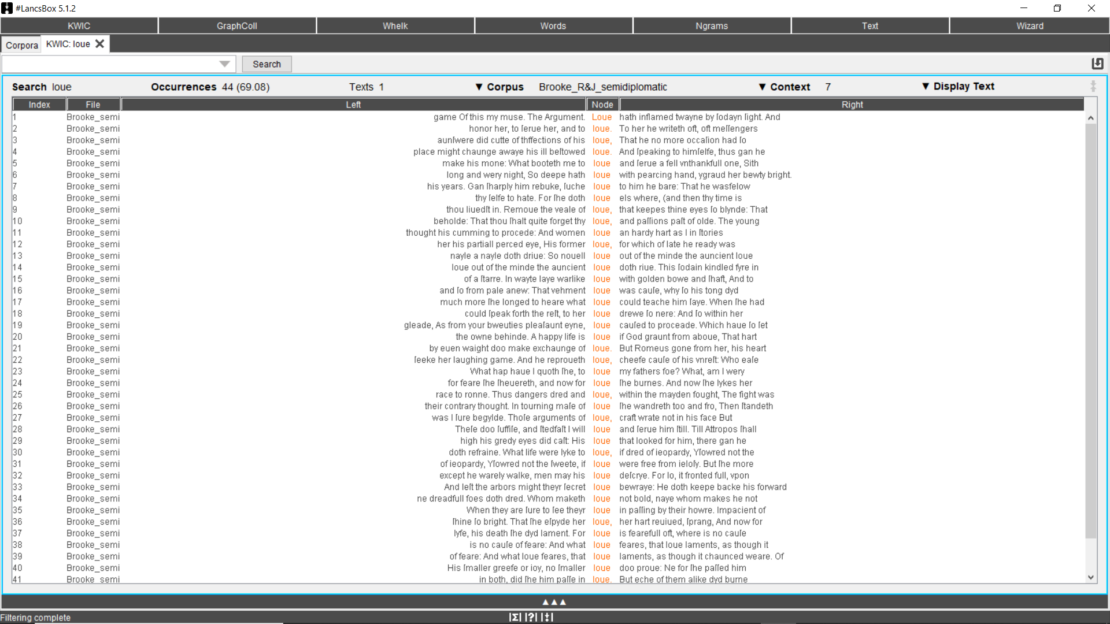

The KWIC (Key Word in Context) tool generates a list of all occurrences of a search term (or node) in a corpus, together with the words to its left and right, in the form of a concordance (Fig. 6). The number of occurrences of the search term is indicated right above the results, while the ‘File’ column indicates the text in which the keyword appears.
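The logic behind a concordance can be sketched in Python as follows. This is an illustrative approximation rather than #Lancsbox's own code: the function names, the default span of five words and the sample sentence are assumptions.

```python
import re


def tokenize(text):
    """Return the runs of letters in the text as tokens, preserving case."""
    return re.findall(r"[^\W\d_]+", text)


def kwic(tokens, node, span=5):
    """Return (left context, node, right context) for every occurrence of `node`."""
    node = node.lower()
    lines = []
    for i, tok in enumerate(tokens):
        if tok.lower() == node:
            left = " ".join(tokens[max(0, i - span):i])
            right = " ".join(tokens[i + 1:i + 1 + span])
            lines.append((left, tok, right))
    return lines


if __name__ == "__main__":
    sample = "Romeus loved Iuliet and Iuliet loved Romeus"  # invented sample
    hits = kwic(tokenize(sample), "iuliet", span=2)
    print(f"Occurrences: {len(hits)}")
    for left, node, right in hits:
        # Right-align the left context so that the node forms a central column.
        print(f"{left:>20}  {node}  {right}")
```

Aligning the node in a central column, as in the printed output, is what makes recurrent patterns to its left and right easy to spot.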

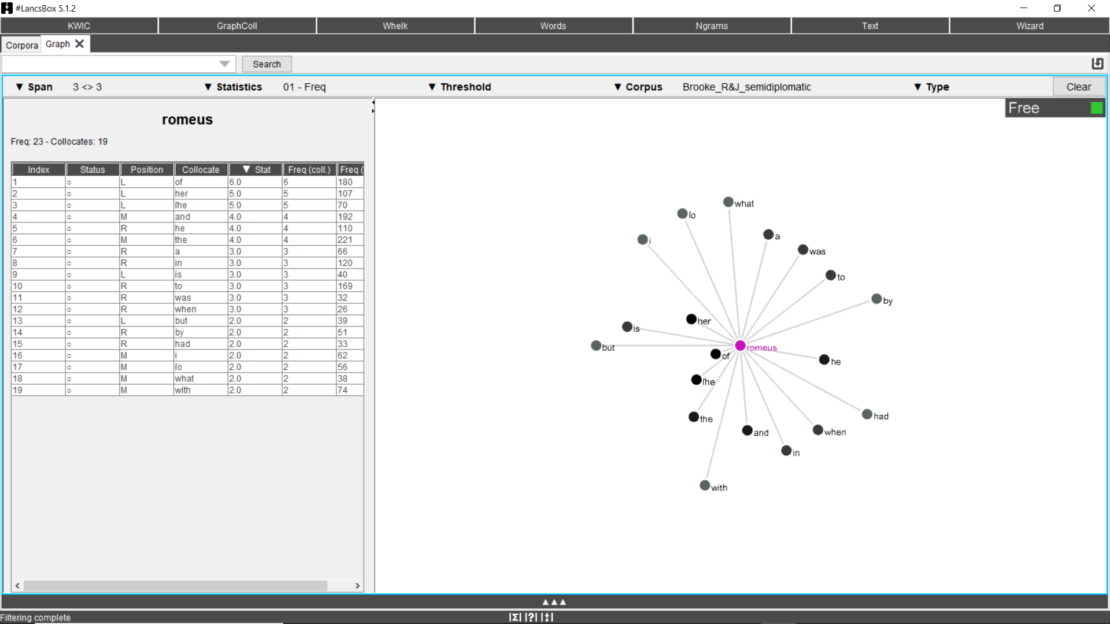

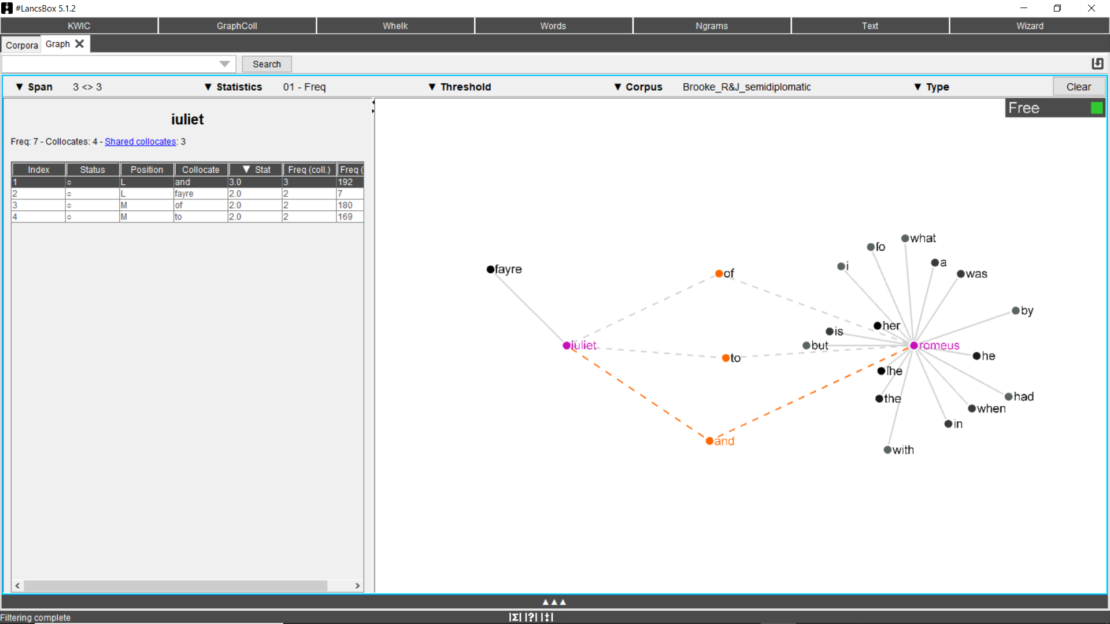

The results provided by the KWIC tool can be filtered, and different parameters set, with the GraphColl tool (Fig. 7). It identifies collocations and displays them in a table and as a collocation graph or network. A span of left and right collocates can be set (generally 3L-3R or 5L-5R), as well as a threshold for the minimum number of occurrences per collocation (from 1.0). The left side of the screen shows the position of the collocates (L, R or M for middle, meaning sometimes on the left and sometimes on the right) and their frequency. The collocation graph is shown on the right side of the screen. Collocates are arranged according to their collocational position. The closer a collocate is to the node, the higher its frequency of occurrence with the search term.
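The span and threshold settings described above can be illustrated with a small Python sketch. It counts raw co-occurrence frequency only and is not how #Lancsbox itself computes its results, since the software also offers statistical association measures; the function name, parameters and sample sentence are assumptions.

```python
import re
from collections import Counter, defaultdict


def tokenize(text):
    """Lower-case the text and return its runs of letters as tokens."""
    return re.findall(r"[^\W\d_]+", text.lower())


def collocates(tokens, node, left=5, right=5, min_freq=1):
    """Return (collocate, position, frequency) rows for `node`.

    Position is "L" or "R" when the collocate only occurs on one side of
    the node, and "M" when it occurs on both sides.
    """
    freq = Counter()
    sides = defaultdict(set)
    for i, tok in enumerate(tokens):
        if tok != node:
            continue
        for j in range(max(0, i - left), i):
            freq[tokens[j]] += 1
            sides[tokens[j]].add("L")
        for j in range(i + 1, min(len(tokens), i + 1 + right)):
            freq[tokens[j]] += 1
            sides[tokens[j]].add("R")
    rows = []
    for word, count in freq.most_common():
        if count >= min_freq:  # threshold on the number of co-occurrences
            position = "M" if len(sides[word]) == 2 else next(iter(sides[word]))
            rows.append((word, position, count))
    return rows


if __name__ == "__main__":
    sample = "sweet Iuliet fair and fair Iuliet sweet"  # invented sample
    for row in collocates(tokenize(sample), "iuliet", left=1, right=1):
        print(row)
```

Raising `min_freq` or narrowing the span shortens the table, in the same way that stricter settings reduce the number of collocates drawn in the graph.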

Results can be cleared, but they can also be kept while a new term is searched, in order to compare the collocational patterning of more than one node (Fig. 8). The figure below shows the collocates that the search terms “Romeus” and “Iuliet” have in common (called shared collocates). Such information is valuable, for instance, in research on semantic fields.
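In set terms, shared collocates are the intersection of the collocate sets of the two nodes. The sketch below makes this explicit; it is an assumption-laden illustration (hypothetical function names, a symmetric span, an invented sample, and no sentence-boundary handling), not the procedure #Lancsbox follows internally.

```python
import re


def tokenize(text):
    """Lower-case the text and return its runs of letters as tokens."""
    return re.findall(r"[^\W\d_]+", text.lower())


def collocate_set(tokens, node, span=5):
    """Return the set of words found within `span` tokens of any occurrence of `node`."""
    found = set()
    for i, tok in enumerate(tokens):
        if tok == node:
            found.update(tokens[max(0, i - span):i])
            found.update(tokens[i + 1:i + 1 + span])
    found.discard(node)
    return found


def shared_collocates(tokens, node_a, node_b, span=5):
    """Return the collocates that the two nodes have in common, excluding the nodes."""
    shared = collocate_set(tokens, node_a, span) & collocate_set(tokens, node_b, span)
    return shared - {node_a, node_b}


if __name__ == "__main__":
    sample = "Romeus wept alone. Iuliet wept also. Romeus slept."  # invented sample
    print(shared_collocates(tokenize(sample), "romeus", "iuliet", span=1))
```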

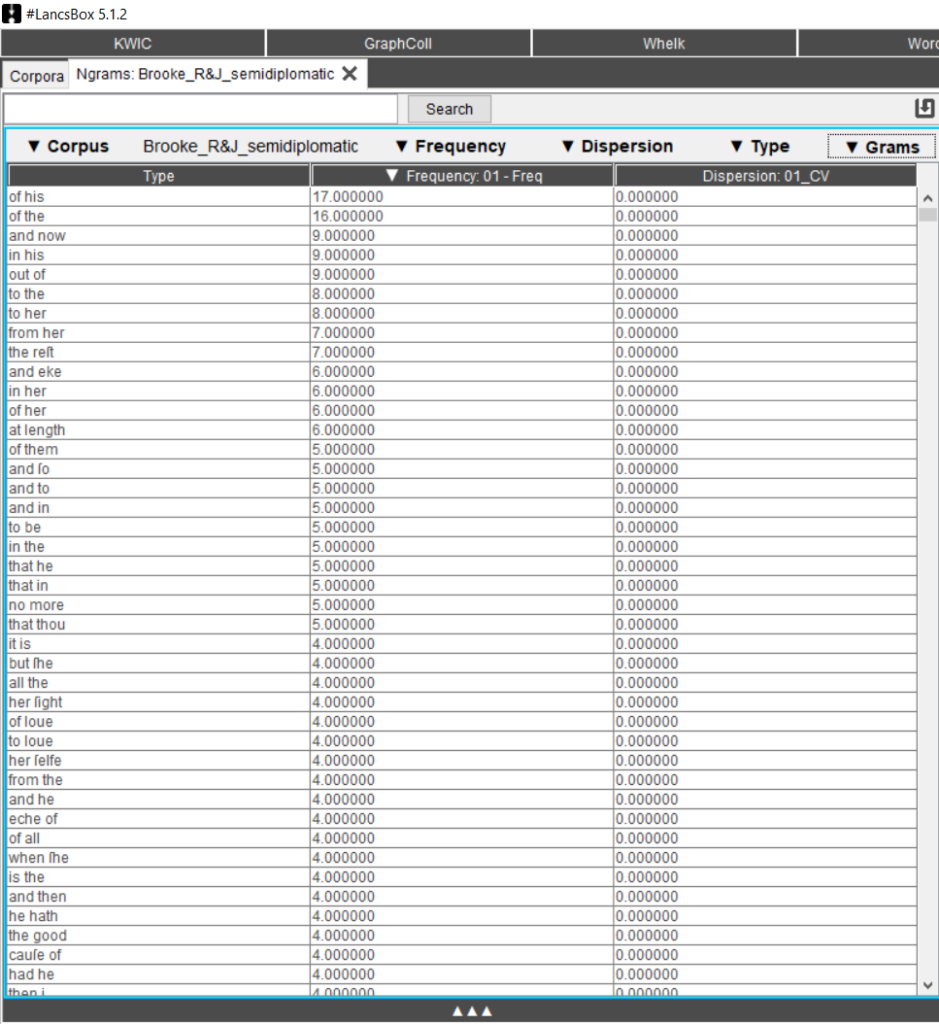

The Ngrams tool allows detailed analyses of the frequencies of n-grams, thus focusing more on syntactic structures than on morphology and lexis. When Ngrams is clicked, #Lancsbox provides results for 2-grams (the default setting; Fig. 9), but the search settings can be adjusted up to 10-grams.
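An n-gram frequency list can be sketched in Python by sliding a window of n tokens over the text. The function names and the sample phrase are assumptions made for illustration; the default of n=2 simply mirrors the default described above.

```python
import re
from collections import Counter


def tokenize(text):
    """Lower-case the text and return its runs of letters as tokens."""
    return re.findall(r"[^\W\d_]+", text.lower())


def ngram_counts(tokens, n=2):
    """Count every sequence of `n` consecutive tokens."""
    if n < 1:
        raise ValueError("n must be at least 1")
    # zip over n progressively shifted copies of the token list.
    return Counter(zip(*(tokens[i:] for i in range(n))))


if __name__ == "__main__":
    sample = "my love my love my life"  # invented sample
    for gram, count in ngram_counts(tokenize(sample), n=2).most_common():
        print(" ".join(gram), count)
```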

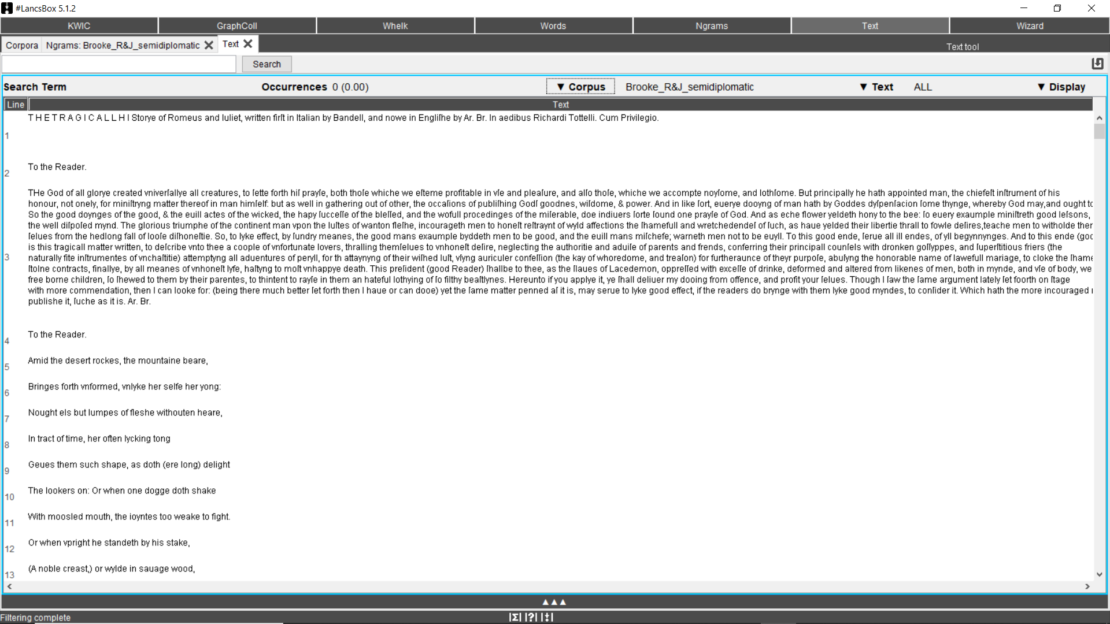

At any moment, it is possible to consult the whole corpus by clicking on the Text tool (Fig. 10).

[1] Voyant tools (https://voyant-tools.org/) was developed by Stéfan Sinclair (McGill University, Montreal, Canada) and Geoffrey Rockwell (University of Alberta, Canada).

[2] #Lancsbox was developed by Vaclav Brezina and Tony McEnery at Lancaster University, UK (updated versions of this free software can be downloaded at http://corpora.lancs.ac.uk/lancsbox/).